Scale from NVIDIA MGX™ building blocks to NVIDIA HGX™-class training platforms—with air, ORv3, and immersion-ready options.

Scale-up performance

From NVIDIA MGX™ GPU servers to NVIDIA HGX™-class systems for large-model training.

Deployment flexibility

EIA 19" and ORv3 options to match datacenter standards.

Thermal headroom

Air + immersion cooling options for dense GPU configurations.

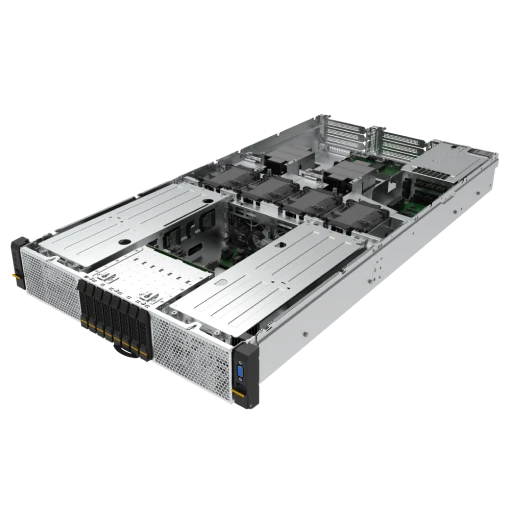

SG231-2-L1

2U DLC NVIDIA Rubin NVL8 platform for next‑gen AI training

- 8× NVIDIA Rubin NVL8 GPUs (SXM) with NVLink

- 6th‑gen NVLink fabric for scale‑up multi‑GPU

- 2U single‑phase DLC for dense, efficient AI compute

SGX30-2

8x NVIDIA Blackwell Ultra GPU server for extreme AI training

- Up to 8x NVIDIA Blackwell Ultra GPUs

- Dual Intel Xeon 6 for balanced CPU + I/O throughput

- Scale-up fabric: NVSwitch + NVLink + PCIe integrated

SX420-2A

4U NVIDIA MGX™ platform on AMD EPYC for flexible 8‑GPU AI

- NVIDIA MGX™ reference platform on 2x AMD EPYC™ 9005

- Up to 8x H200 NVL, L40S or RTX PRO Blackwell GPUs

- Configurable storage: up to 16x E1.S/E3.S or 12x U.2

OX420-2A

ORv3 NVIDIA MGX™ 4U accelerated server for hyperscale AI racks

- OCP ORv3 form factor for 21" hyperscale racks

- NVIDIA MGX™ + 2x AMD EPYC™ 9005 with 8-GPU options

- Front I/O + serviceability optimized for fleet deployment

SG323-2A-I

2U 8-GPU immersion-cooled server for sustained AI loads

- NVIDIA MGX™ reference platform on 2x AMD EPYC™ 9005

- Up to 8x H200 NVL, L40S or RTX PRO Blackwell GPUs

- Configurable storage: up to 16x E1.S/E3.S or 12x U.2

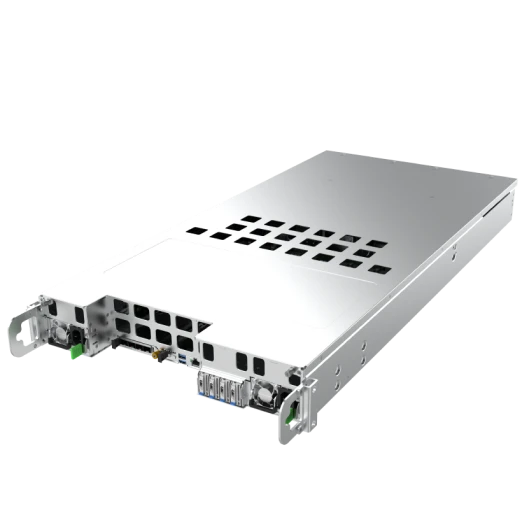

SG223-2A-I

3U 8-GPU immersion-cooled server for sustained AI loads

- Immersion cooling maximizes GPU clocks under heavy loads

- Up to 8x PCIe GPUs: AMD MI210 or NVIDIA H100 NVL

- 2x AMD EPYC™ 9004/9005 with up to 6TB memory
